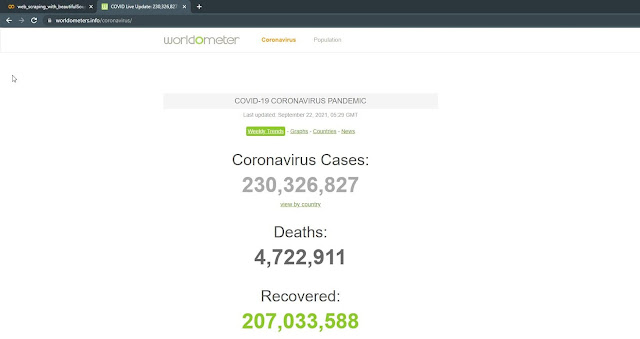

Implementing Web Scraping in Python with BeautifulSoup4: Scraping Corona Data from worldometers.info (https://www.worldometers.info/coronavirus/)

Hello Good People! Today we are going to scrape coronavirus data from worldometers.info. For this we will use the Beautiful Soup library for Python, and we will write our code in Google Colab. Before jumping into the implementation, let's first discuss web scraping, Beautiful Soup, and Colab.

Web Scraping

"Data is the new oil", they say. Over time the internet has filled up with unstructured data that is accessible to the general public. We can search a website and find all kinds of data there, but if we want to structure that data, specific tools and techniques are required. This is where web scraping comes into play. Web scraping means extracting data from websites automatically with the help of web crawlers. Web crawlers are scripts that connect to the World Wide Web over the HTTP protocol and let us extract data from websites in a systematic manner.

There are many tools and services available for web scraping, such as ScrapingBee, ScraperAPI, and ScrapingBot. Some of these are paid tools, so why spend money when you can extract data from a website on your own? For this you can use libraries available for various programming languages, such as Selenium, boilerpipe, Nutch, Scrapy, and Beautiful Soup. Among them, Beautiful Soup is in my opinion the easiest to use and a fast way to get started with web scraping.

Beautiful Soup

Beautiful Soup is a Python library for extracting data from HTML and XML files. It works with a parser of your choice and provides idiomatic ways of navigating, filtering, searching, and modifying the parse tree. Parsing speed depends largely on the parser you choose; lxml, which we use in this post, is generally the fastest option. If you want to go through the basics of Beautiful Soup, you can consult the documentation here. Beautiful Soup 4 is the latest version, so that is the version we are going to use.
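
To make navigating, searching, and modifying a little more concrete, here is a minimal sketch that runs on a small hand-written HTML snippet rather than a real page. The snippet, its tag names, and its class names are made up for illustration, and the sketch uses Python's built-in html.parser so that nothing beyond beautifulsoup4 is needed.

```python
from bs4 import BeautifulSoup

# A tiny hand-written document (illustrative only, not taken from any website)
html = """
<html><body>
  <h1 id="title">Fruit prices</h1>
  <ul>
    <li class="fruit">Apple</li>
    <li class="fruit">Banana</li>
  </ul>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")

# Navigating: reach the first <h1> tag as an attribute of the tree
heading = soup.h1.text

# Searching: find() returns the first match, find_all() returns every match
first_fruit = soup.find("li", class_="fruit").text
all_fruits = [li.text for li in soup.find_all("li", class_="fruit")]

# Modifying: replace the text of a tag in place
soup.h1.string = "Fruit list"
new_heading = soup.h1.text

print(heading, first_fruit, all_fruits, new_heading)
```

The same find() and find_all() calls are what we rely on later to pick out the table and its rows.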

Google Colab

Google Colab, or Colaboratory, is a product from Google Research. It gives you an environment for writing and running Python code online, and it is especially well suited to data science and machine learning tasks. I am using it because it makes it easy for me to share my Colab file with you. I will include the link to the Colab file we are going to implement today.

Now, let's move on to the implementation. Web scraping involves several steps, so let's go through them one by one.

Step 1: Open a new notebook in Google Colab, then install and import the required libraries.

We are making use of two libraries, plus Python's built-in csv module for saving the results:

- beautifulsoup4: for scraping

- requests: for handling HTTP calls

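# Installing libraries (the leading "!" runs a shell command in a Colab cell)
!pip install beautifulsoup4
!pip install requests
!pip install lxml  # parser passed to BeautifulSoup in Step 2

# Importing libraries
from bs4 import BeautifulSoup
import requests
import csv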

Step 2: Find the URL of the web page you want to scrape.

Load the web page as text using the requests.get() function, then parse it using Beautiful Soup.

# Loading the web page as text
oursource = requests.get('https://www.worldometers.info/coronavirus/').text
# Parsing the HTML with the lxml parser
soup = BeautifulSoup(oursource, 'lxml')
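
The download above does not check whether the request actually succeeded. As a hedged sketch, the helper below adds a timeout and an HTTP status check before parsing. The name fetch_soup and its parameters are hypothetical and not part of the original notebook; the get parameter exists only so the function can be exercised without network access.

```python
import requests
from bs4 import BeautifulSoup


def fetch_soup(url, parser="lxml", timeout=10, get=requests.get):
    """Download a page and parse it (hypothetical helper, for illustration).

    `get` defaults to requests.get and can be swapped out for testing.
    """
    response = get(url, timeout=timeout)
    # Raises requests.HTTPError on 4xx/5xx instead of parsing an error page
    response.raise_for_status()
    return BeautifulSoup(response.text, parser)


# Usage (needs network access, so it is left commented out here):
# soup = fetch_soup('https://www.worldometers.info/coronavirus/')
```

Without a timeout, requests.get() can wait indefinitely on an unresponsive server, which is awkward in a notebook.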

Step 3: Find what you want to scrape from that web page.

You can do this by inspecting the web page with your browser's developer tools and hovering over the data you want. In our case we want to scrape the table with id="main_table_countries_today".

# Extracting the required table from the web page
ourBody = soup.find('table', id='main_table_countries_today')

Now that we have the table, we only need its body, so let's extract it.

# Considering only the body of our table
ourBody = ourBody.tbody
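
One thing worth knowing is that find() returns None when nothing matches, for example if the site changes its markup. The sketch below shows the same lookup on a hand-written stand-in table (the country names and numbers are made up), with an explicit check before using the result.

```python
from bs4 import BeautifulSoup

# Hand-written stand-in for the real page (all values are made up)
sample_html = """
<table id="main_table_countries_today">
  <thead><tr><th>#</th><th>Country</th></tr></thead>
  <tbody>
    <tr><td>1</td><td>Examplestan</td></tr>
    <tr><td>2</td><td>Samplonia</td></tr>
  </tbody>
</table>
"""

sample_soup = BeautifulSoup(sample_html, "html.parser")

sample_table = sample_soup.find("table", id="main_table_countries_today")
if sample_table is None:
    # Fail early with a clear message instead of an AttributeError later
    raise ValueError("table not found")

sample_body = sample_table.tbody          # first <tbody> inside the table
sample_rows = sample_body.find_all("tr")  # the <thead> row is not included

# A lookup for an id that does not exist gives None
missing = sample_soup.find("table", id="no_such_table")
print(len(sample_rows), missing)
```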

Step 4: Let's open a CSV file and prepare the table where we will save our data.

We open the file for writing and write a header row naming the columns we want to keep.

# Creating a CSV file to save the extracted data
# newline='' is what the csv module recommends, to avoid extra blank lines on some platforms
csv_file = open('corona_data.csv', 'w', newline='')
csv_writer = csv.writer(csv_file)
csv_writer.writerow(['Number', 'Country Name', 'Total Cases',
                     'New Cases', 'Total Deaths', 'New Deaths',
                     'Total Recovered', 'New Recovered'])
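
An alternative way to handle the file is a with block, which closes it automatically even if an error occurs partway through. This is only a sketch: the file name demo.csv and the single data row are made up, and it writes to a temporary directory so it cannot overwrite corona_data.csv.

```python
import csv
import os
import tempfile

header = ['Number', 'Country Name', 'Total Cases']
rows = [['1', 'Examplestan', '1,234']]  # made-up row for illustration

# Write to a temporary directory so the sketch does not touch corona_data.csv
path = os.path.join(tempfile.mkdtemp(), 'demo.csv')

# The with block closes the file automatically when it ends
with open(path, 'w', newline='', encoding='utf-8') as f:
    writer = csv.writer(f)
    writer.writerow(header)
    writer.writerows(rows)

# Read the file back to confirm what was written
with open(path, newline='', encoding='utf-8') as f:
    written = list(csv.reader(f))
print(written)
```

Note that the value '1,234' survives the round trip: the csv module quotes fields that contain commas, so they are not split into two columns.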

Step 5: Now that we have prepared everything, let's extract the data.

What we want from the table is the text of each table data cell (td) in each row (tr). So let's implement a for loop that collects the cells of every row and writes the first eight of them to our CSV file:

# Extracting data
for table in ourBody.find_all('tr'):
    var = []
    for countryData in table.find_all('td'):
        var.append(countryData.text)
    # Write the first eight cells, matching the header row
    csv_writer.writerow([var[0], var[1], var[2],
                         var[3], var[4], var[5], var[6], var[7]])

# Close the file so that all rows are written to disk
csv_file.close()
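
The loop above writes the cell text exactly as it appears on the page and raises an IndexError if a row has fewer than eight cells. As a hedged sketch, the two helpers below tidy the values and skip short rows. Both function names are hypothetical, the sample row is made up, and the assumption that counts contain ',' and '+' characters should be checked against the actual page.

```python
def clean_cell(text):
    """Strip surrounding whitespace and remove ',' and '+' characters.

    Assumption: counts look like '1,234' or '+56'. Note that this would
    also remove commas from a country name that contains one.
    """
    return text.strip().replace(',', '').replace('+', '')


def first_n_cells(cells, n=8):
    """Return the first n cleaned cells, or None if the row is too short."""
    if len(cells) < n:
        return None
    return [clean_cell(c) for c in cells[:n]]


# Made-up row with nine cells, mimicking the shape of one table row
row = ['1', ' Examplestan ', '1,234,567', '+1,000', '50,000 ',
       '+12', '900,000', '+5,000', 'extra']
print(first_n_cells(row))
print(first_n_cells(['only', 'two']))
```

Inside the loop, you could then write a row only when first_n_cells(var) is not None.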

Finally, we have extracted the data we needed, and our final CSV file looks like this.

Here is the link to the colab_file. You can explore it there as well. Now that you are familiar with how web scraping works, why don't you try extracting data from a stock exchange in your country?
